Managing Proxy Pools at Scale: Concurrency, TTL, and Failover Design

Scrapers and automations fail in costly, quiet ways: rising block rates, throttled sessions, or noisy retries that double your spend. When that happens, the root cause is often weak proxy pool management: over-aggressive concurrency, sticky sessions that burn out, or brittle failover. By the end of this guide, you’ll know how to design, test, and monitor pools that actually scale.

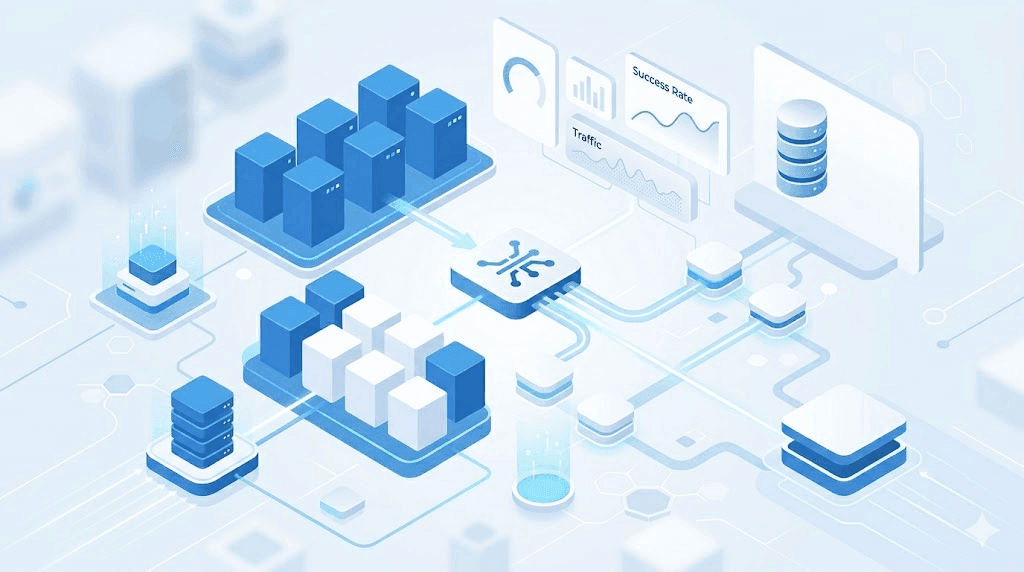

Proxy pool management is the discipline of controlling how many requests each IP carries, how long sessions live (TTL), and how quickly traffic fails over to healthy routes. Do it well and you cut block rate, increase successful requests per dollar, and reduce engineering churn. Do it poorly and the system looks busy but delivers bad data.

What is proxy pool management?

Proxy pool management coordinates IP rotation, per-target concurrency, session TTL, and failover logic to sustain high success rates under anti-bot pressure. In practice, it means setting guardrails (limits and timeouts), measuring health, and adapting traffic in near real time. It’s the backbone of reliable scraping and automation at scale.

If you’re mapping out a new program or expanding an existing one, skim common proxy use cases to anchor expectations and edge cases you’ll meet in production. See examples across pricing monitors, travel inventory, and social listening in our proxy use cases library.

Concurrency: push throughput, not your luck

Concurrency is how many in-flight requests you allow per IP, per target, or per session. Too high and you get blocks and captchas. Too low and you miss SLAs.

A good starting model:

- Cap concurrency per IP and per domain. Example targets to validate in a pilot: 1–3 concurrent requests per IP per domain.

- Use a global token bucket to shape bursts across the fleet. That prevents stampedes after retries or scheduler spikes.

- Add adaptive backoff. Increase inter-request delay on soft blocks (429/5xx), then decay back when success improves.
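
As a rough illustration of the last two bullets, here is a minimal Python sketch of a fleet-wide token bucket and a per-domain adaptive delay. The class names, default values, and the injectable `clock` parameter are assumptions made for this example, not part of any particular proxy library. The per-IP cap from the first bullet is not shown.

```python
import time


class TokenBucket:
    """Fleet-wide limiter: refills `rate` tokens per second up to `capacity`."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock          # injectable so the bucket can be tested
        self.tokens = capacity
        self.updated = clock()

    def try_acquire(self):
        """Take one token if available; return False so the caller can wait."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class AdaptiveDelay:
    """Per-domain inter-request delay: grows on soft blocks, decays on success."""

    def __init__(self, base=0.2, ceiling=30.0, grow=2.0, decay=0.9):
        self.base = base
        self.ceiling = ceiling
        self.grow = grow
        self.decay = decay
        self.delay = base

    def on_response(self, status):
        """Update and return the delay (seconds) to wait before the next request."""
        if status == 429 or 500 <= status < 600:
            # Soft block: back off multiplicatively, up to the ceiling.
            self.delay = min(self.ceiling, self.delay * self.grow)
        elif 200 <= status < 300:
            # Success: decay slowly back toward the base delay.
            self.delay = max(self.base, self.delay * self.decay)
        return self.delay
```

A worker would call `try_acquire()` before each dispatch and sleep for the value returned by `on_response()` before sending the next request to the same domain. Growing fast and decaying slowly is a design choice for this sketch: it recovers cautiously after a block spike.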

A simple sizing formula:

- Effective concurrency = healthy_proxies × sessions_per_proxy × concurrency_per_session.

- In plain terms: how many clean lanes you have multiplied by how many cars you let into each lane.
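
The formula is simple enough to check by hand, but a tiny helper makes it easy to rerun as pool health changes. The input numbers below are illustrative assumptions, not recommendations.

```python
def effective_concurrency(healthy_proxies, sessions_per_proxy, concurrency_per_session):
    """Total in-flight requests the fleet can carry under the current caps."""
    return healthy_proxies * sessions_per_proxy * concurrency_per_session


# Illustrative numbers: 200 healthy proxies, 1 session each, 2 requests per session.
print(effective_concurrency(200, 1, 2))  # 400
```

Only healthy proxies count, so the result shrinks as proxies are quarantined or cooling down.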

Validate your settings with short canary runs per target. Track success rate, median response time, and captcha incidence before you scale up.

TTL and session strategy: stick when it helps, rotate when it hurts

TTL (time to live) is how long you keep a session or IP sticky for a target. Sticky sessions help with login flows, carts, or paginated lists. Rotation helps on public pages that punish repeated hits.

Practical guidance:

- Use sticky sessions where state matters (auth, checkout, deep pagination).

- Set TTL by target risk. Example targets to validate in a pilot: 1–5 minutes for stateful flows; 10–60 seconds for public pages under moderate pressure.

- Refresh TTL on success only; expire aggressively on soft or hard blocks.

- Rotate user-agents and minimal headers with the session. Keep your fingerprint consistent within a sticky window to avoid suspicion.
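
The "refresh on success, expire on block" rule can be sketched as a small in-memory session store. This is a minimal sketch under assumptions: the `SessionStore` name, its methods, and the dictionary layout are hypothetical, and a production store would also need locking and cleanup of stale entries.

```python
import time
import uuid


class SessionStore:
    """Sticky sessions keyed by (proxy, domain) with a sliding TTL."""

    def __init__(self, clock=time.monotonic):
        self.clock = clock
        self._sessions = {}  # (proxy, domain) -> {"id", "ttl", "expires_at"}

    def get_or_create(self, proxy, domain, ttl):
        """Return the live session for this pair, or start a fresh one."""
        key = (proxy, domain)
        now = self.clock()
        session = self._sessions.get(key)
        if session is None or session["expires_at"] <= now:
            session = {"id": uuid.uuid4().hex, "ttl": ttl, "expires_at": now + ttl}
            self._sessions[key] = session
        return session

    def on_success(self, proxy, domain):
        """Refresh the TTL only on a successful request on a live session."""
        session = self._sessions.get((proxy, domain))
        now = self.clock()
        if session is not None and session["expires_at"] > now:
            session["expires_at"] = now + session["ttl"]

    def on_block(self, proxy, domain):
        """Expire immediately on a soft or hard block."""
        self._sessions.pop((proxy, domain), None)
```

Keying the fingerprint (user-agent and headers) off the session `id` is one way to keep it stable within the sticky window and different across rotations.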

Robust proxy pool management treats TTL as a control knob, not a checkbox. Expect to tune it per domain over time.

Failover design that actually recovers

Failover must be fast, local, and aware of error type. Blind global retries can amplify blocks and costs.

Practical failover steps:

- Classify errors quickly. 4xx from anti-bot? Switch IP and increase backoff. Connection timeouts? Try another exit in the same ASN or region. 5xx? Slow down and retry with jitter.

- Use a circuit breaker per target and per exit pool. Trip on rising failure rate or latency. When open, route to a secondary pool.

- Maintain multiple pools by geo and IP type, with warm capacity. Cold starts during incidents create more failures.

- Cache target DNS and pre-test TLS to cut handshake failures during switchover.
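
The first step, classifying errors before choosing a recovery action, might look like the sketch below. Treating 403 and 429 as the anti-bot 4xx codes is an assumption for this example (real targets vary), and the `Action` names and `jittered_delay` helper are hypothetical.

```python
import random
from enum import Enum


class Action(Enum):
    DELIVER = "deliver"                      # hand the response to the parser
    ROTATE_IP = "rotate_ip"                  # switch IP and increase backoff
    RETRY_SAME_REGION = "retry_same_region"  # try another exit in the same ASN/region
    RETRY_WITH_JITTER = "retry_with_jitter"  # slow down, then retry


def classify(status=None, timed_out=False):
    """Map one request outcome to a failover action."""
    if timed_out:
        return Action.RETRY_SAME_REGION
    if status in (403, 429):  # assumed anti-bot signals
        return Action.ROTATE_IP
    if status is not None and 500 <= status < 600:
        return Action.RETRY_WITH_JITTER
    return Action.DELIVER


def jittered_delay(attempt, base=0.5, cap=30.0):
    """Exponential backoff with full jitter: uniform in [0, min(cap, base * 2**attempt)]."""
    return random.uniform(0, min(cap, base * 2 ** attempt))
```

Keeping classification in one pure function makes it easy to unit test and to extend with target-specific signals such as captcha markers in the response body.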

When your workload depends on speed and throughput, low-latency pools matter most. If that’s your case, review capabilities typical of datacenter proxies and how they behave under bursty traffic.

Pool composition: pick the right IP type for the job

- Datacenter IPs: fast, cost-efficient, predictable latency. Best for public content and APIs with lenient bot controls. Watch for ASN-level blocks.

- Residential IPs: higher trust on consumer sites; better for stealth and varied geos. Expect higher costs and variable last-mile latency.

- Mobile IPs: niche use for high-friction targets; often limited throughput and higher price.

Compose your fleet around your targets:

- Start with datacenter for speed and cost. Add residential where block rate stays high after tuning concurrency and TTL.

- Keep geos close to the target’s user base. Validate geo accuracy in logs.

- Maintain separate pools per risk profile to isolate reputation.

Implementation blueprint (language-agnostic)

Below is a compact control loop to adapt load and recover from failures.

    # CPSR = connection success rate
    loop tick=100ms:
        for target in targets:
            health = metrics[target]
            if health.cpsr < SLO_CPSR or health.block_rate > SLO_BLOCK:
                reduce(target.global_tokens, factor=0.8)
                shorten(target.ttl, floor=10s)
                open_circuit_if_needed(target)
            else if health.success_rate > target.prev_success:
                increase(target.global_tokens, step)

        for worker in idle_workers:
            target = scheduler.next_target()
            proxy = pool.acquire(target.geo, type=target.ip_type)
            session = session_store.get_or_create(proxy, target, ttl=target.ttl)
            dispatch(request, proxy, session, headers=fingerprint(session))

    on_response(resp):
        if is_soft_block(resp): mark_proxy(proxy, warmdown=60s); rotate_session()
        if is_hard_block(resp): quarantine(proxy); escalate_ip_type()
        record_metrics()

Key ideas: shape global tokens, shrink TTL under pressure, trip circuits on rising blocks, and escalate IP type only when cheaper knobs fail.

Monitoring and SLOs that matter

Track signals that tie directly to outcomes:

- CPSR (connection success rate) and HTTP success rate by target and IP type.

- Block indicators: captchas seen, 403/429 ratios, WAF challenge counts.

- Latency P50/P95, queue depth, retry percentage.

- Geo accuracy, ASN diversity, and IP reuse/burn rate.

- Session stability: average lifetime and requests per session before failure.

Alert when:

- Block rate increases > X% over N minutes (example target to validate: 20% over 10 minutes).

- CPSR drops below threshold (example: < 95% sustained for 5 minutes).

- Circuit breakers open for more than M minutes without recovery.
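
One way to evaluate the CPSR alert is a rolling time window of connection outcomes. The sketch below approximates "sustained for 5 minutes" as the average over a 5-minute window, which is a simplification. `RollingWindow`, `cpsr_alert`, and the `min_samples` guard are assumptions made for this example.

```python
import time
from collections import deque


class RollingWindow:
    """Keeps (timestamp, ok) samples from the last `seconds` seconds."""

    def __init__(self, seconds, clock=time.monotonic):
        self.seconds = seconds
        self.clock = clock
        self.samples = deque()

    def record(self, ok):
        self.samples.append((self.clock(), bool(ok)))

    def _prune(self):
        # Drop samples that have aged out of the window.
        cutoff = self.clock() - self.seconds
        while self.samples and self.samples[0][0] < cutoff:
            self.samples.popleft()

    def success_rate(self):
        """Share of successful samples in the window, or None if it is empty."""
        self._prune()
        if not self.samples:
            return None
        return sum(ok for _, ok in self.samples) / len(self.samples)


def cpsr_alert(window, threshold=0.95, min_samples=20):
    """True when the windowed CPSR is below threshold with enough samples to trust."""
    rate = window.success_rate()
    return rate is not None and len(window.samples) >= min_samples and rate < threshold
```

For example, `RollingWindow(seconds=300)` per target, with `record()` called on every connection attempt and `cpsr_alert()` polled by the alerting job. The `min_samples` guard keeps a quiet target from paging on a handful of failures.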

Two real-world scenarios

Price monitoring at 500 RPS: Datacenter pool with per-IP concurrency = 2, TTL = 30s. Under a mid-day block spike, the system cuts tokens by 30%, rotates sessions on 429s, and opens a circuit to a small residential pool for retry tier only. Block rate stabilizes in 5 minutes.

Logged-in travel scraping: Sticky sessions (TTL = 3 minutes) for account pages with cart state. Concurrency = 1 per session. The breaker trips on captcha floods, forcing rotation and a 60s cool-off per proxy. Data freshness holds, and accounts avoid lockouts.

Watch out for this

- Infinite retries on 403/429. You’ll burn IPs and inflate costs. Classify and back off.

- Single shared pool for all targets. One strict site can poison the reputation for the rest.

- Over-sticky sessions. Great for state, bad for reputation. Rotate sooner on soft blocks.

- No warm standby capacity. Failover that spins up cold pools is not failover.

- Ignoring header consistency. Change too much between requests and you look robotic; change nothing for hours and you look suspicious.

Quick decision aid: default knobs to start pilots

| Situation | Concurrency per IP | Session TTL | Failover First Step |
|---|---|---|---|
| Public catalog, moderate controls | 1–3 | 10–30s | Rotate IP, add 200–500ms jitter |
| Authenticated/cart flows | 1 | 2–5m | Keep sticky; swap IP only on hard blocks |
| High-friction target | 1 | 20–60s | Trip breaker early; escalate pool type |

Use these as example targets to validate in a pilot, then tune per domain.

Costs, compliance, and ROI

The business goal is lower cost per successful request. Track this alongside engineering effort.

Tips:

- Spend where it pays back. If datacenter with careful concurrency meets your SLA, stay there. Escalate IP type only when block-adjusted costs demand it.

- Budget time and compute for quality checks. Retrying bad data is more expensive than preventing it.

- Keep region-specific pools for data residency or contractual limits. Document which targets require user consent, robots.txt respect, or legal review.
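
Cost per successful request is simple to compute, and comparing it across pools makes the "block-adjusted cost" idea concrete. The spend and success figures below are made-up illustrative values, not real pricing.

```python
def cost_per_success(spend, attempts, success_rate):
    """Spend divided by the number of requests that actually succeeded."""
    successes = attempts * success_rate
    if successes <= 0:
        raise ValueError("no successful requests to divide by")
    return spend / successes


# Hypothetical monthly figures for two pools serving the same target.
datacenter = cost_per_success(spend=100.0, attempts=1_000_000, success_rate=0.80)
residential = cost_per_success(spend=600.0, attempts=1_000_000, success_rate=0.97)

print(f"datacenter:  ${datacenter * 1000:.4f} per 1,000 successes")
print(f"residential: ${residential * 1000:.4f} per 1,000 successes")
```

In this invented case the cheaper pool still wins despite its lower success rate. The comparison flips only when the success gap or the retry overhead grows large enough, which is why the metric should be tracked per target.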

For budgeting context and SKU planning, see our high-level plans and pricing overview and align volume tiers with your expected CPSR.

Frequently Asked Questions

How many proxies do I need for 1,000 requests per minute?

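Work backwards from per-IP concurrency, pacing, and success rate. At 90% success, 1,000 successful requests per minute takes roughly 1,100 attempts. If you run 2 concurrent requests per IP, start around 600–700 IPs, then tune down as you raise CPSR. That starting point is deliberately conservative: it works out to fewer than two attempts per IP per minute. Validate with a 10–15 minute pilot per target.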
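
A back-of-the-envelope calculator for this estimate is sketched below. The answer above does not state a per-IP pacing figure, so `per_ip_requests_per_min` is an assumption you need to supply from your own pilot. The value 1.7 is picked only because it lands inside the 600–700 range quoted above.

```python
import math


def estimate_pool_size(successes_per_min, success_rate, per_ip_requests_per_min):
    """IPs needed to hit a success target at a given per-IP pace (assumed input)."""
    attempts_per_min = successes_per_min / success_rate
    return math.ceil(attempts_per_min / per_ip_requests_per_min)


# 1,000 successes/min at 90% success, each IP paced at ~1.7 requests/min (assumed).
print(estimate_pool_size(1000, 0.90, 1.7))
```

Raising the success rate or the safe per-IP pace shrinks the pool, which is the "tune down as you raise CPSR" step.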

What TTL should I use for login-required scraping?

Keep sessions sticky long enough to avoid re-auth flows, often 2–5 minutes. Shorten TTL on signs of pressure (captcha, 429s), and refresh only on successful requests. Treat each domain separately and tune over time.

Should I mix datacenter and residential proxies in one pool?

Keep them as separate pools tied to failover tiers. Route baseline traffic to the cost-effective pool (often datacenter) and reserve residential for retries or high-friction paths. This isolates reputation and clarifies spend.

How do I detect when to trip a circuit breaker?

Use rolling windows per target. Trip if CPSR drops below a threshold or if block rate spikes beyond your tolerance for N minutes. Add a half-open state to test recovery with small traffic before fully closing.
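
A minimal breaker with closed, open, and half-open states could look like this sketch. For brevity it evaluates failures over a fixed count of results (a tumbling window) rather than a true rolling time window. The class name, thresholds, and the injectable `clock` are assumptions for illustration.

```python
import time


class CircuitBreaker:
    """Closed -> open on a bad window; open -> half-open after a cooldown."""

    def __init__(self, failure_threshold=0.5, window=20, cooldown=60.0,
                 probes=5, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.window = window      # results per evaluation window
        self.cooldown = cooldown  # seconds to stay open before probing
        self.probes = probes      # trial requests allowed while half-open
        self.clock = clock
        self.state = "closed"
        self.opened_at = 0.0
        self._reset_counts()

    def _reset_counts(self):
        self.successes = 0
        self.failures = 0
        self.probes_issued = 0

    def _trip(self):
        self.state = "open"
        self.opened_at = self.clock()
        self._reset_counts()

    def allow(self):
        """Return True if a request may be sent through this route now."""
        if self.state == "open":
            if self.clock() - self.opened_at < self.cooldown:
                return False
            self.state = "half_open"
            self._reset_counts()
        if self.state == "half_open":
            if self.probes_issued >= self.probes:
                return False
            self.probes_issued += 1
        return True

    def record(self, ok):
        """Feed back one request outcome."""
        if self.state == "open":
            return  # late responses from before the trip are ignored
        if ok:
            self.successes += 1
        else:
            self.failures += 1
        if self.state == "half_open":
            if not ok:
                self._trip()  # any probe failure reopens the circuit
            elif self.successes >= self.probes:
                self.state = "closed"
                self._reset_counts()
        elif self.successes + self.failures >= self.window:
            total = self.successes + self.failures
            if self.failures / total >= self.failure_threshold:
                self._trip()
            else:
                self._reset_counts()
```

While `allow()` returns False, the caller routes traffic to the secondary pool. One breaker instance per target and exit pool keeps a strict site from tripping routes for everything else.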

Why do I still see captchas after rotating IPs?

You may be reusing the same ASN, carrying aggressive headers, or hitting target-side rate limits. Vary realistic browser header sets per session, add jitter between requests, and increase ASN diversity. Check whether your proxies share subnets that the target already rates as risky.

What metrics prove my changes improved reliability?

Look for higher CPSR, lower block rate, and a drop in retries per success. Latency p95 should stabilize or fall. Most telling is cost per successful request, which should trend down after tuning.

How do I keep compliance risk under control?

Maintain target-level policies for consent, terms, and data categories. Log geo and IP type used per request. Limit scraping of personal data unless your legal team has reviewed the use case and controls.

Is rotating user-agents enough to avoid blocks?

No. It helps, but domains watch timing, path patterns, and error-driven retries. Pair UA rotation with per-IP concurrency caps, session TTL controls, and domain-aware backoff.

Bringing it together

Effective proxy pool management blends three control loops: tame concurrency, right-size TTL, and fail over quickly without thrashing. The tradeoff is speed versus reputation: push hard enough to meet SLAs, but rotate and cool down before you draw attention.

Next steps:

- Run a 30–60 minute pilot per domain with conservative defaults, then expand.

- Instrument CPSR, block rate, retries per success, and session lifetime by pool.

- Test breaker thresholds, TTL decay under pressure, and per-IP concurrency caps.

For deeper patterns and implementation details, explore our technical guides. With disciplined proxy pool management, you can hit throughput goals, keep data quality high, and control costs without firefighting every week.

About the author

Marcus Delgado

Marcus Delgado is a network security analyst focused on proxy protocols, authentication models, and traffic anonymization. He researches secure proxy deployment patterns and risk mitigation strategies for enterprise environments. At SquidProxies, he writes about SOCKS5 vs HTTP proxies, authentication security, and responsible proxy usage.